🐲 About Xulong

Hello! I’m Xulong Tang, but you can call me Ben!

I am a third-year PhD student in the Department of Computer Science at The University of Texas at Dallas (UTD). I am advised by Professor Rawan Alghofaili and co-advised by Professor Balakrishnan Prabhakaran.

Research Interests:

- 3D Human Dance Motion Generation

- Extended Reality (XR), including Virtual Reality, Augmented Reality, and Mixed Reality

- Computer Vision

- Multimedia Retrieval

- Multimodal Learning

Beyond research, I am also a game developer:

I used Unity to build a VR educational game that teaches children about the universe at the Sci-Tech Discovery Center.

I also contributed to an unreleased third-person survival shooter built with Unreal Engine and C++, where I was responsible for gameplay and the user interface.

🔥 News

- 2026.03: 🎉🎉 One paper was conditionally accepted at SIGGRAPH 2026.

- 2026.03: 🎉🎉 One paper was accepted at CVPR Workshop 2026.

- 2026.01: 🎉🎉 One paper was accepted at IEEE VR 2026.

- 2025.09: 🎉🎉 One paper was accepted at NeurIPS 2025.

- 2025.07: 🎉🎉 One paper was accepted at ACM Multimedia 2025.

- 2024.06: 🎉🎉 Two papers were accepted at ICMR 2024.

- 2024.01: 🎉🎉 Started my PhD at The University of Texas at Dallas.

📝 Publications

* denotes equal contribution.

IEEE VR 2026

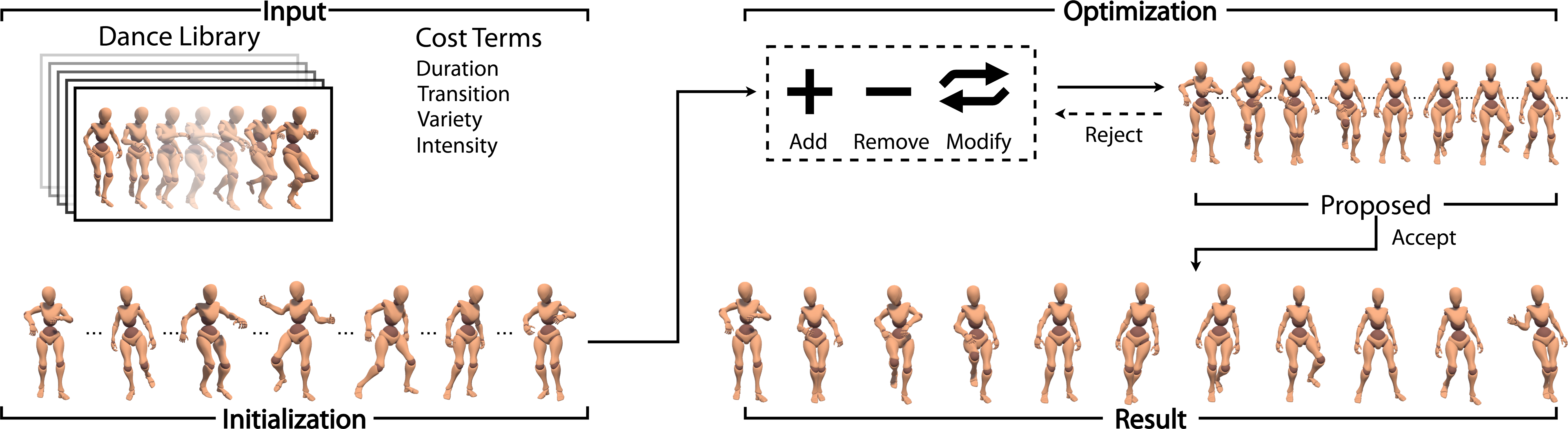

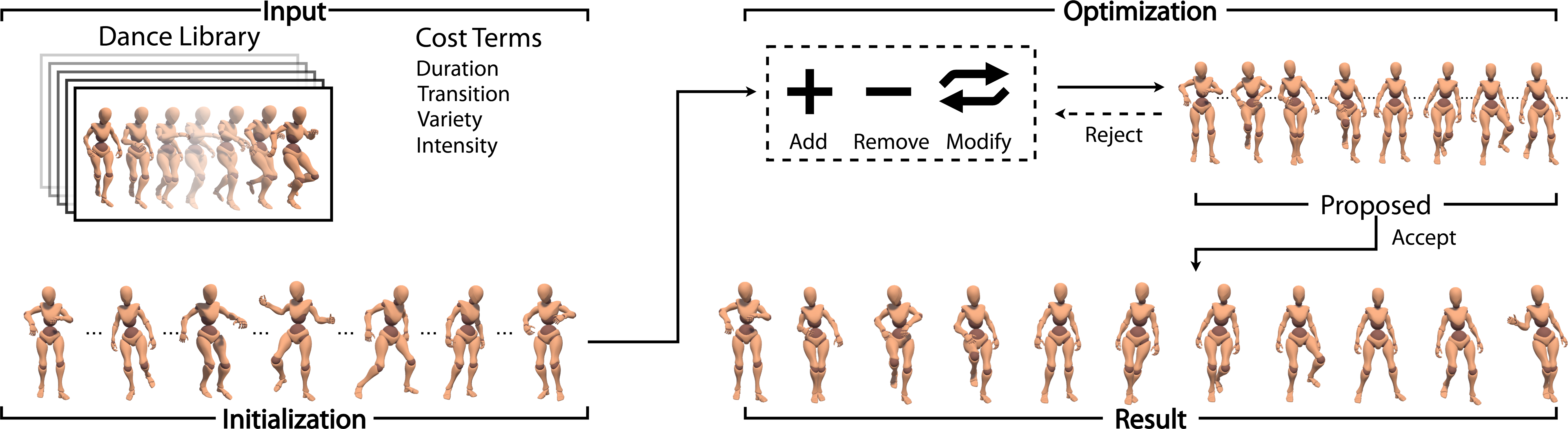

Personalized Dance Synthesis Based on Physical and Cognitive Intensities

CVPR Workshop 2026

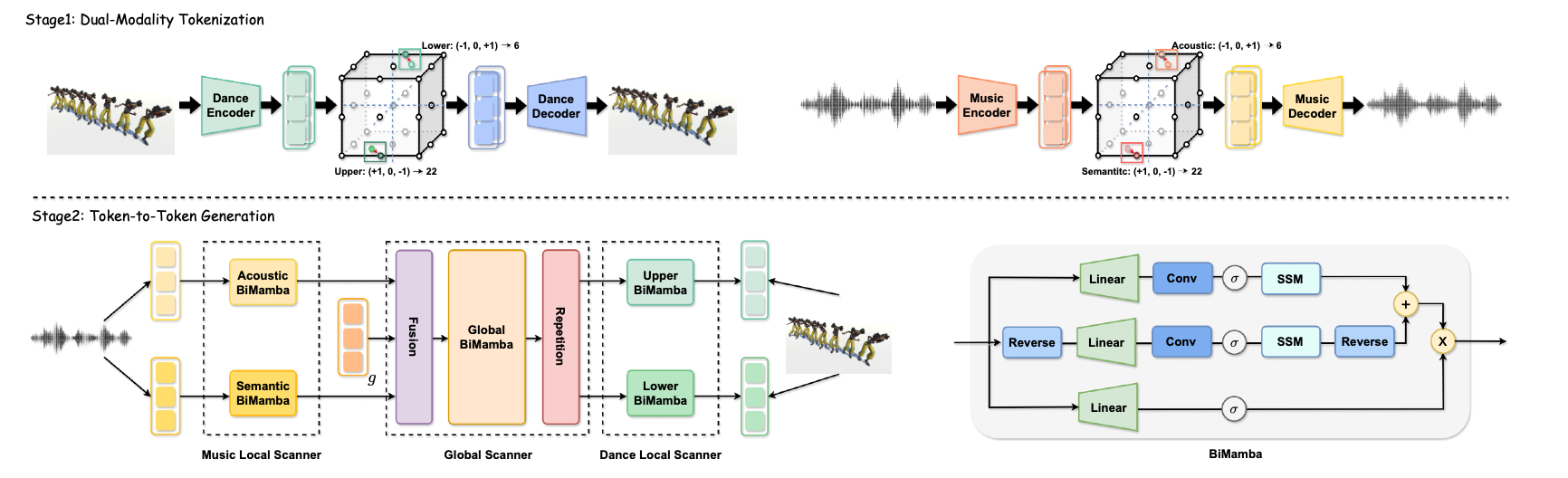

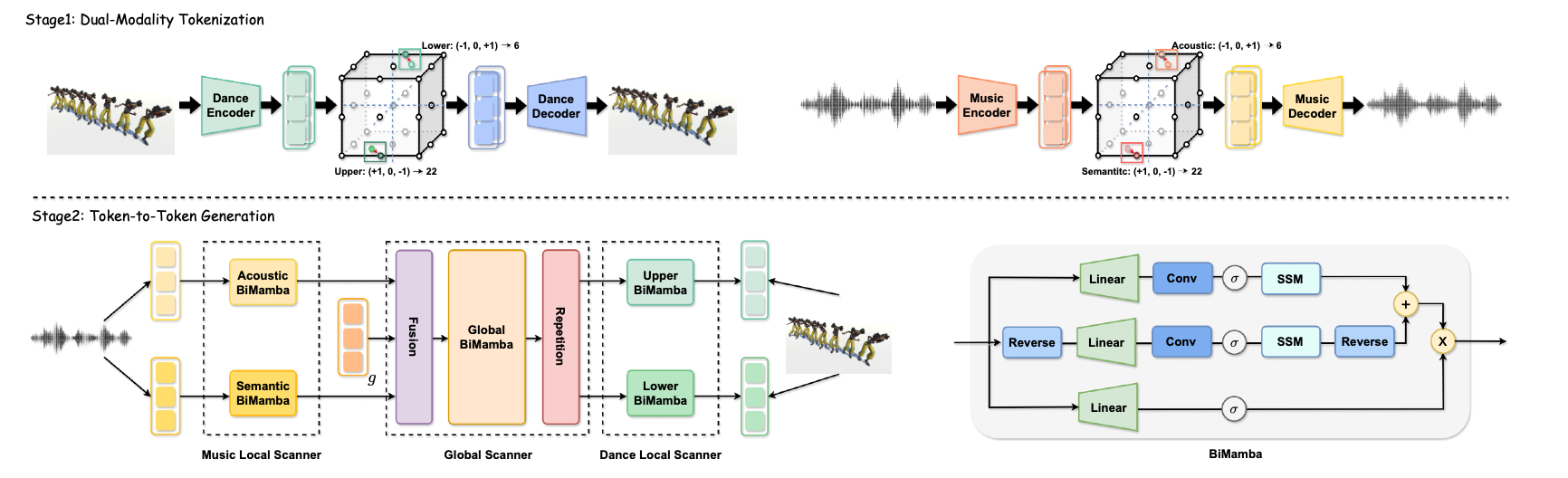

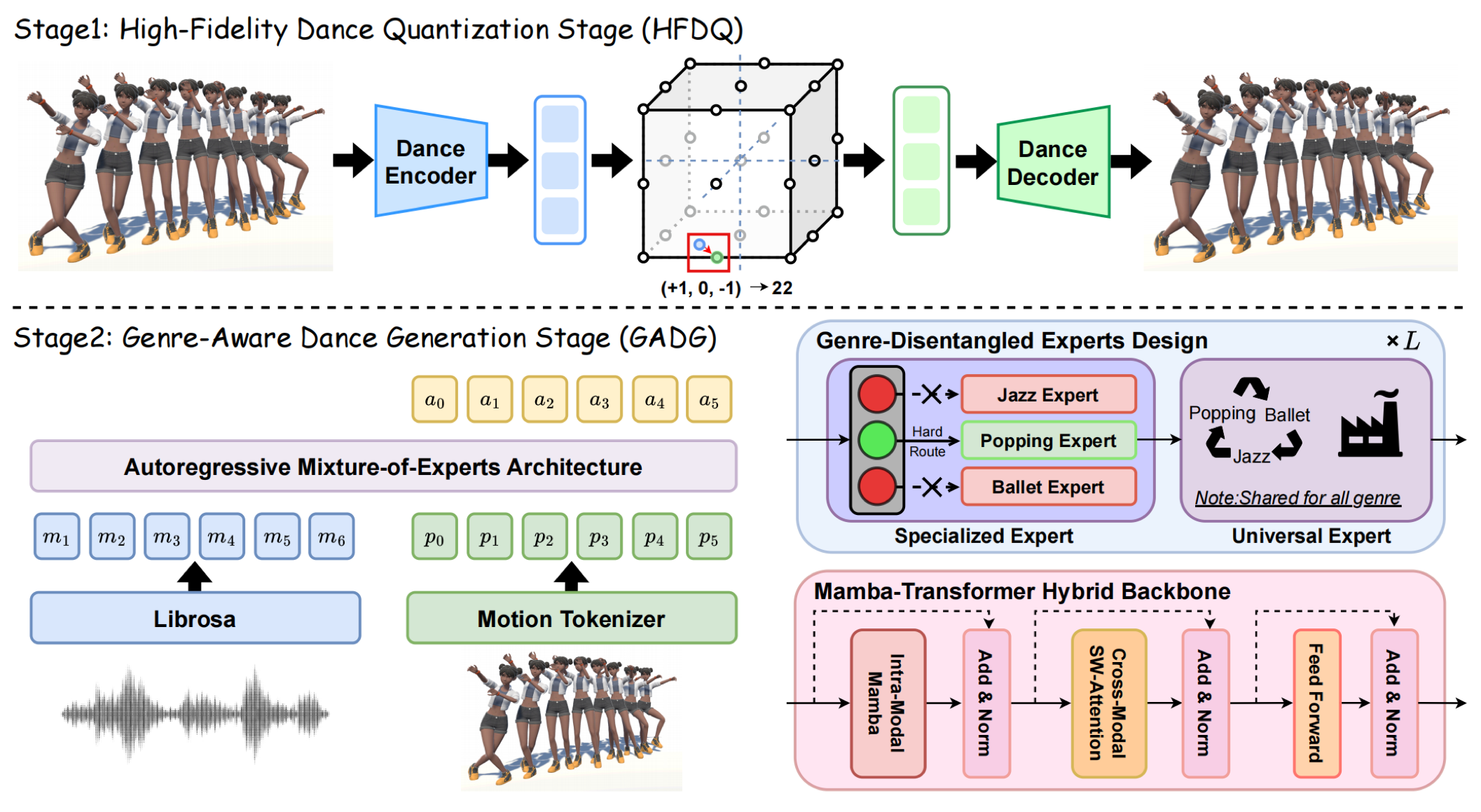

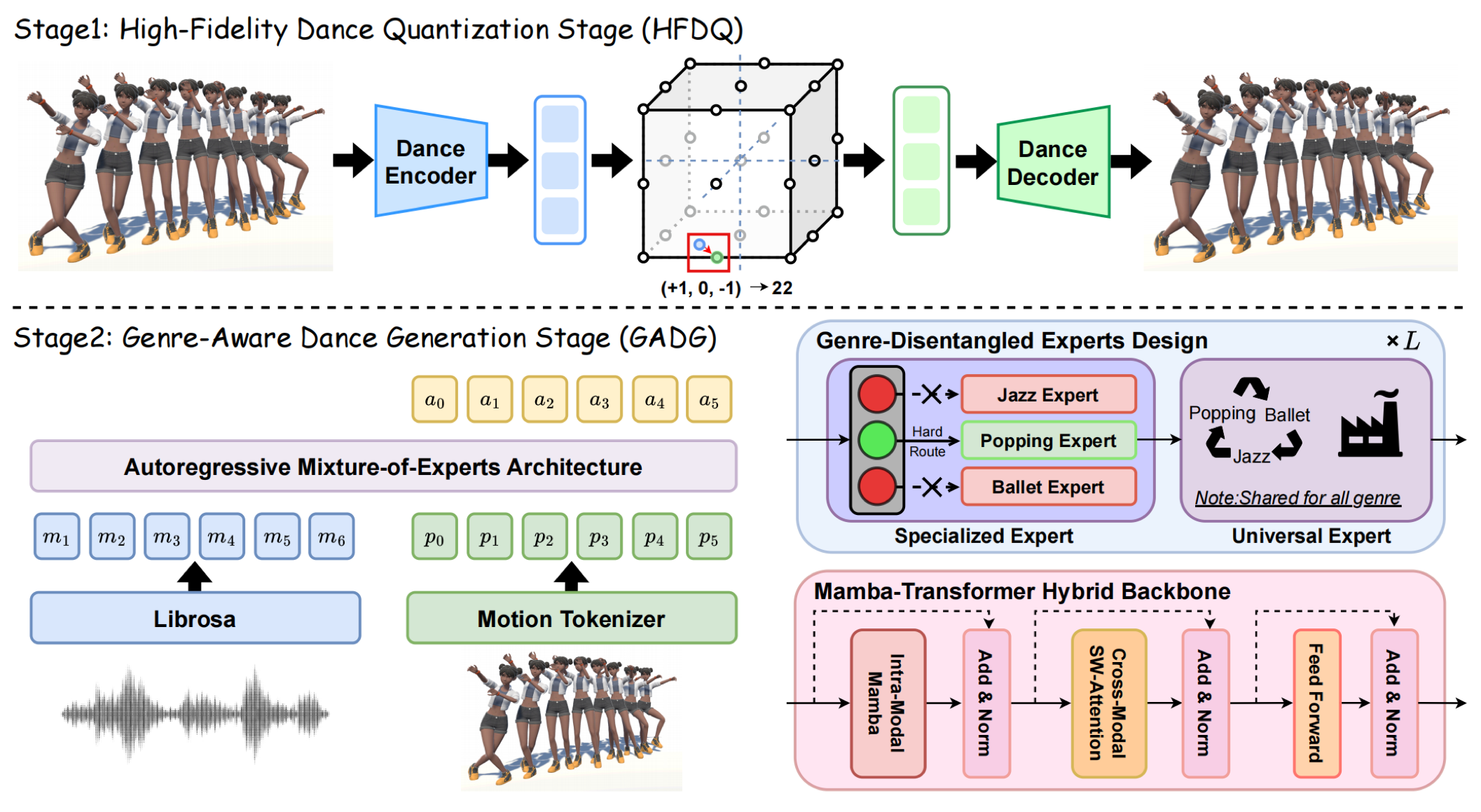

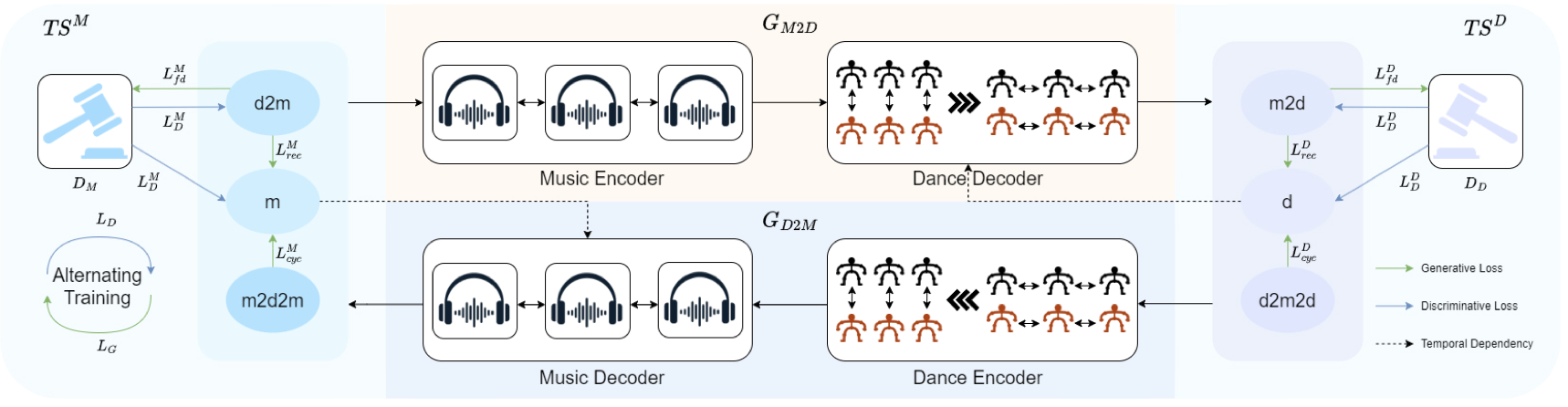

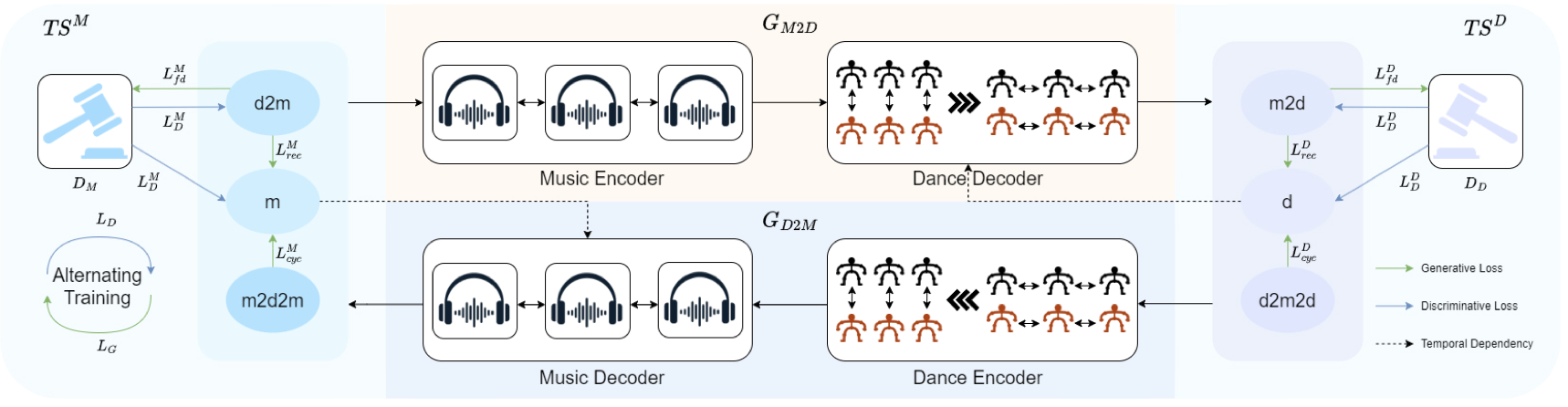

TokenDance: Token-to-Token Music-to-Dance Generation with Bidirectional Mamba

NeurIPS 2025

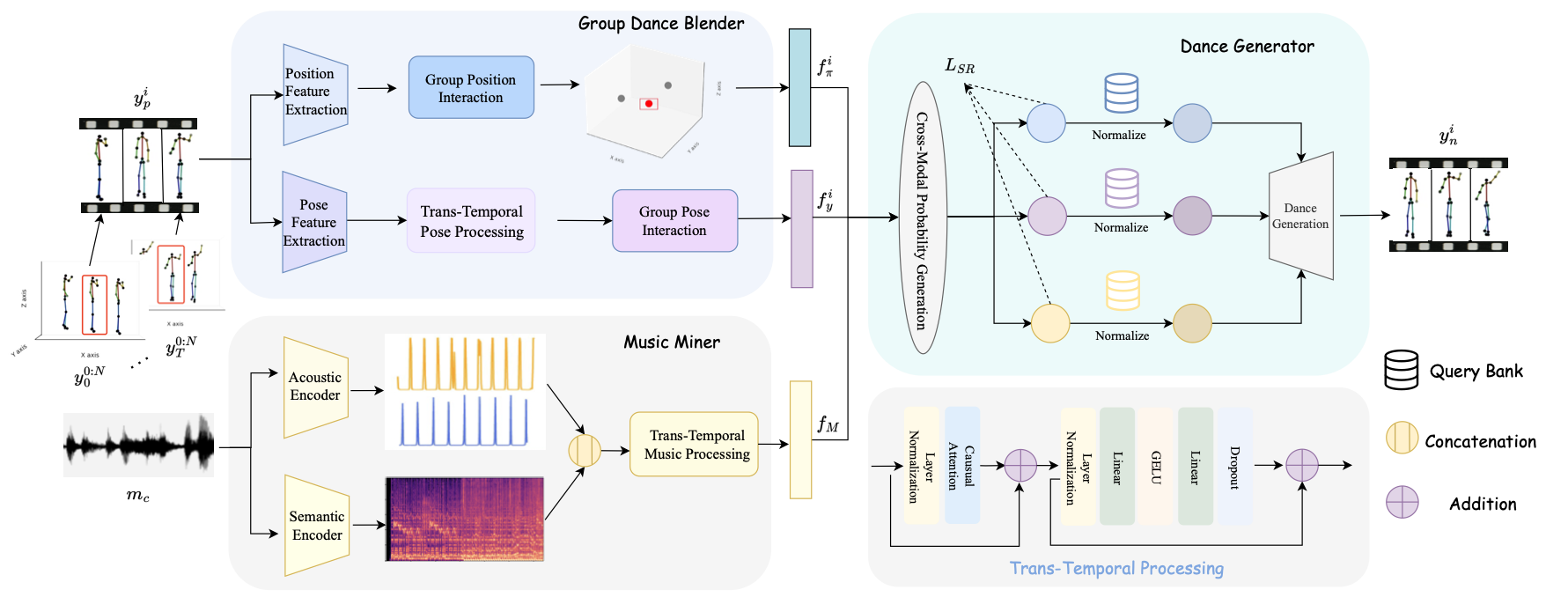

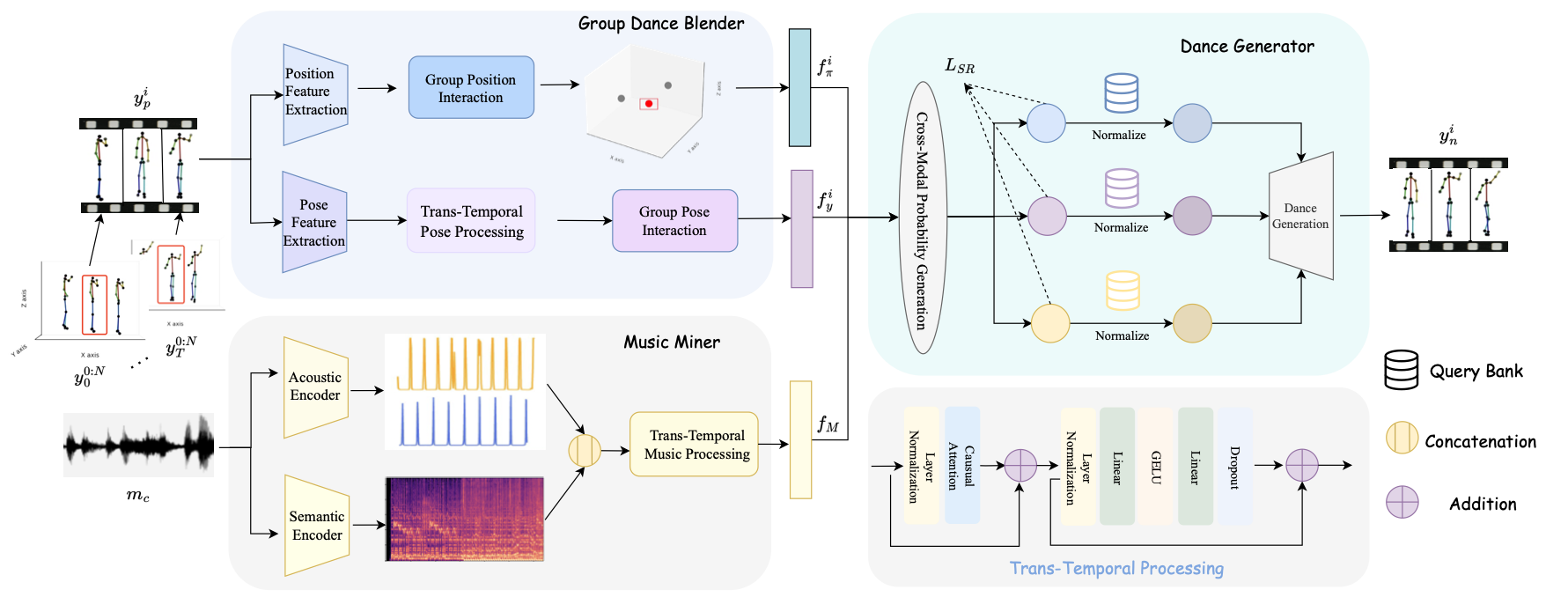

ACM MM 2025

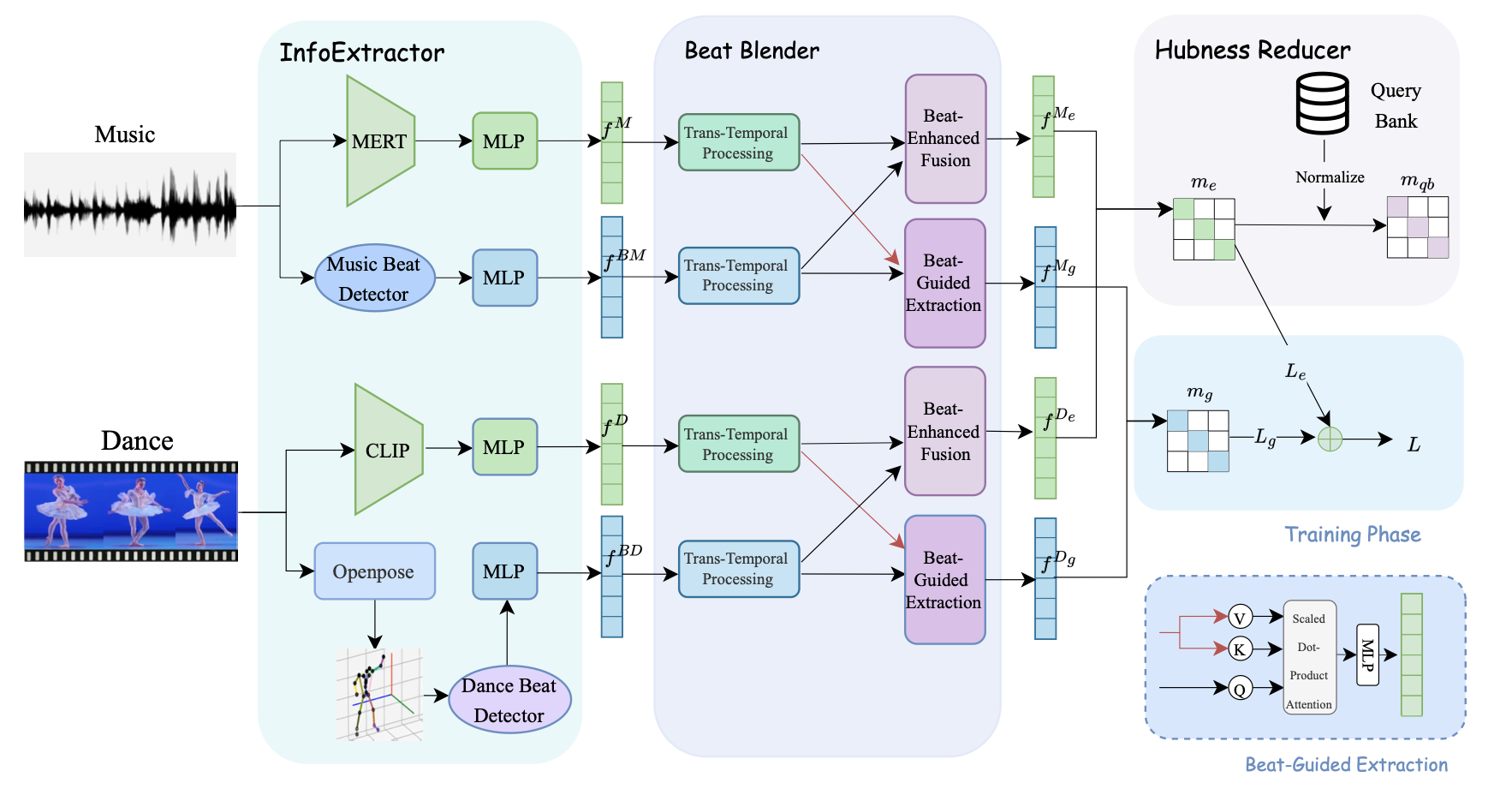

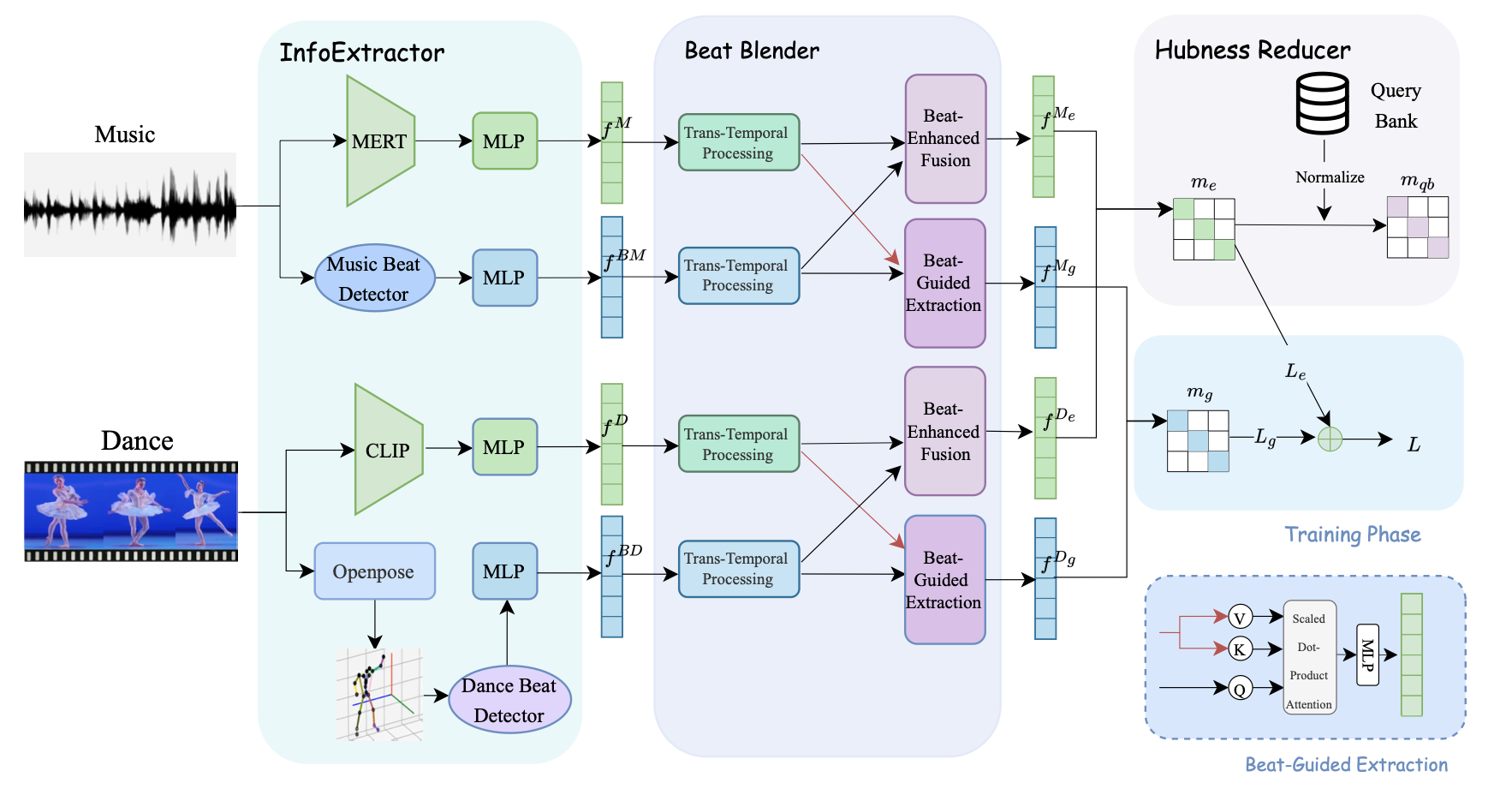

ICMR 2024

ICMR 2024

📖 Education

- 2024.01 - present, PhD in Computer Science, The University of Texas at Dallas, Richardson, Texas

- 2023.09 - 2024.01, Master of Computer Science, The University of Texas at Dallas, Richardson, Texas

- 2018.09 - 2022.06, Undergraduate, Xidian University, Xi’an, China

💼 Professional Experience

Reviewer

- CVPR (2025–2026)

- ECCV (2026)

- IEEE Transactions on Computers (2024–2025)

- IEEE Virtual Reality (2024–2025)

Instructor

- Augmented Reality 3D User Interface Design Workshop (2024)

- Human-Centered eXtended Reality Lab (2024)

Teaching Assistant

- CS4332: Introduction to Programming Video Games (2025)

- CS6331: Human-Computer Interaction (2024)

💻 Internships

- 2021.12 - 2022.06, Unreal Engine Developer, Shengqu Games, Shanghai, China